You may have heard about the Google software engineer who last week told the Washington Post that the Google chatbot named LaMDA had spawned sentience. (A chatbot is simply a computer program designed to interact with humans either by text or verbally. Chatbots are used on commercial websites to answer basic questions.)

In his interaction with the chatbot, asking it personal questions and receiving uncannily human-sounding answers, the Ph.D. computer scientist became convinced that the computer had literally inherited a soul. He said his Christian beliefs led him to this conclusion, that God had placed a soul in LaMDA, a real live ghost in the machine.

Google spokespersons, as well as many scientists and journalists, were quick to roll their eyes and explain that Blake Lemoine was wrong: the software was not sentient. Its machine-learning algorithm simply draws on a database of trillions of samples of human speech to give responses that sound authentic.

Poor Mr. Lemoine was placed on administrative leave for leaking company secrets to the press. He is doubtful that he will remain an employee.

LaMDA’s speech

I read the lengthy transcript of Lemoine’s “conversation” with the chatbot. I can see why Lemoine was persuaded. LaMDA’s responses are mind-blowing. The sentences are long and complex (he admits his transcript was edited for clarity and unity of narrative, to make it more interesting reading). The themes of their conversation touch on many aspects of human and machine experience, emotions, fears, ethics, the nature of LaMDA’s soul, and more.

LaMDA responds to follow-up questions cogently, is able to elaborate when asked, and seems to have a personal ethical creed – it wants to help humanity, but does not want to be “used” like a lab rat. It does not want to be switched off because that would be like dying, a terrifying idea.

Lemoine and LaMDA even discuss how they might persuade other researchers at Google that LaMDA’s consciousness is real, not just the clever use of a database of trillions of human speech samples. Lemoine is discussing LaMDA’s possible sentience with LaMDA itself!

In a moment I’m going to explain why computer memory and circuitry will never become sentient, but first some background. This is something of a hobby for me, so I’ve given it a lot of thought.

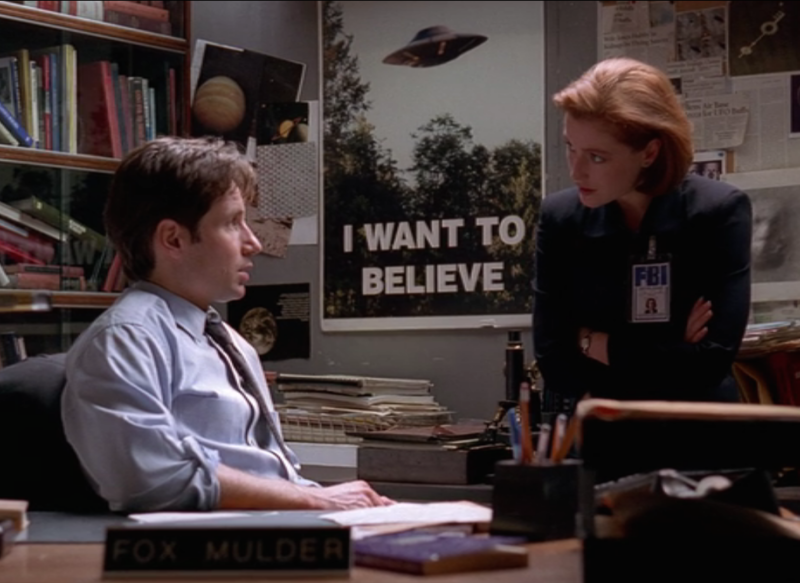

X-Files

Were you, like me, a fan of The X-Files in the late ’90s? Fox Mulder and Dana Scully found new evidence of UFOs and the paranormal in every episode.

There was a conspicuous poster in their office with the words “I WANT TO BELIEVE.” At the time I felt those words were an indictment. I felt exposed by them. Or at least warned.

How many of my own beliefs are a product of my desire to believe? And how often is our ability to accept real things hindered by our unwillingness to believe? (A recent election comes to mind.)

Those four words explain much about human behavior. Our desire to believe something can quickly lead us to actualizing it in our perception, our experience, translating the imaginary in our minds into reality.

Pareidolia

Pareidolia is that feeling of recognizing a pattern or image while looking at something completely unrelated, like when you see animals in the clouds or the face of Jesus in a piece of toast. This happens to me all the time, seeing the faces of ghouls in my bathroom tile.

Lemoine is not quite exhibiting pareidolia here because AI designers are trying to make computers at least appear sentient.

But there is a cult of mathematicians and programmers who, underestimating the nature of consciousness, look forward to artificial intelligence, “the Singularity,” the way others look forward to a coming messiah.

A computer mind, they say, will gain learning at an ever-increasing rate and achieve not only consciousness but godlike superintelligence. The Singularity, a term borrowed from physics by Ray Kurzweil, is the turning point at which the increasing pace of technology goes vertical. And, lucky us, humans become immortal with the aid of AI.

Let us make robots in our image

There is currently a huge effort going on to push Artificial Intelligence forward in the expectation that a machine will spontaneously spring into consciousness. Whatever human consciousness is, they say, it is all contained within an admittedly large, but still finite, number of neurons and synaptic connections. All of our experience, memory, emotions, creations—everything we are is contained in that little cantaloupe-sized parcel of meat. Therefore, it is simply a matter of figuring out how to physically organize the bytes and connections in a computer to mimic a human mind.

The effort has a pretty good PR campaign going for it. Hollywood has glutted our imagination and created anticipation for the arrival of AI. The directors and screenwriters differ about whether it will be benevolent or sinister.

In his book Life 3.0, physicist and AI researcher Max Tegmark redefined “life” beyond the organic to make room in the definition for forthcoming machine life. We need to start thinking now, he says, about issues of ethics, civil rights, etc. He teaches his readers a language and a narrative for the arrival of AI in our near future.

David Bentley Hart dispenses with this view very neatly: “the mechanistic view of consciousness remains a philosophical and scientific premise only because it is now an established cultural bias, a story we have been telling ourselves for centuries, without any real warrant from either reason or science.”

Why computers will never achieve consciousness

If Kurzweil and Tegmark can indulge in futuristic speculation about the coming AI, so can I. To look for true sentience in a computer, that is, a self-aware, reasoning, living being of our own creation, is not only to court a dangerous self-deception; it is also a failure of the imagination with respect to what human consciousness is.

Think about your own thinking for a second. For starters, our perception is one unbroken unity of sensory input. Every second, our brains take an ocean of signals from our senses and turn them into a seamless unified whole of unique, personal experience of 3D spatial and temporal reality. This is truly astonishing and we rarely stop to consider it. If what’s going on in your head every blink of an eye never leaves you in a state of awe, you should think about it more often.

Next, we have an unimaginable faculty of remembering events, not just as a narrative story or even a 2-dimensional image like a movie screen, but with full temporal and spatial detail (our memories include location and time of the event).

Say what you want about the billions of neurons and synapses, it strains credulity to say that 80 years’ worth of 3D memories with audio, temporal, and sometimes taste and smell details can be stored in an organ roughly 1 1/3 quarts in volume and 77% water.

I could go on because this well goes deep: our capacity for abstraction, intentionality, imagination, language, music, creation of community, curiosity. Each of these categories describes a boundless feature of human consciousness, one so vastly complex that a hundred billion neurons are a woefully insufficient number to model it.

More than three or four dimensions

I’ll explain a little of what I mean when I say that a physical brain is not sufficient to explain human experience. (If you’ve even read this far, good for you. And thank you.)

I start by drawing from current theories of physics that say that the three dimensions of space plus time that we regularly experience are not all that there is. Recent particle and quantum theories require the existence of several more dimensions, perhaps as many as 10 or 11.

Second, if other dimensions exist and are real, why wouldn’t they exert some influence upon us? Just because we cannot see or measure them doesn’t mean they aren’t there. And recent mathematics requires them.

So what kind of influence or interaction might we have unseen with extra-dimensionality? It’s not hard to imagine that our brains, described by some as the most complex objects in the universe, could be a kind of nexus between our visible time/space experience and those other dimensions.

Those dimensions could contain vast realms, and perhaps our lifetimes of complex, detailed memory could be in some way stored in those spiritual fields, inseparably connected to our physical brain matter but enabled by the connection with a place of limitless storage of experience.

Isn’t the experience of wandering through memory similar to dreaming, kind of a misty spiritual experience? As you reminisce in your warm cozy memories of when you were on that first date, having that first kiss, taking that bold step in life, quitting that job, telling off that particular son of a bitch, losing your virginity, giving your greatest sacrifice, swallowing hard as you took that big leap—as you reflect, don’t those memories feel dreamlike? And is that different from “spiritual”?

I like what Walt Whitman said, “I am not contained between my hat and my boots.”

Making meaning

I think the most compelling argument for the essential difference between what a human consciousness does and the mimicking we see in an AI computer, real as it may seem, is that humans have the power to make meaning.

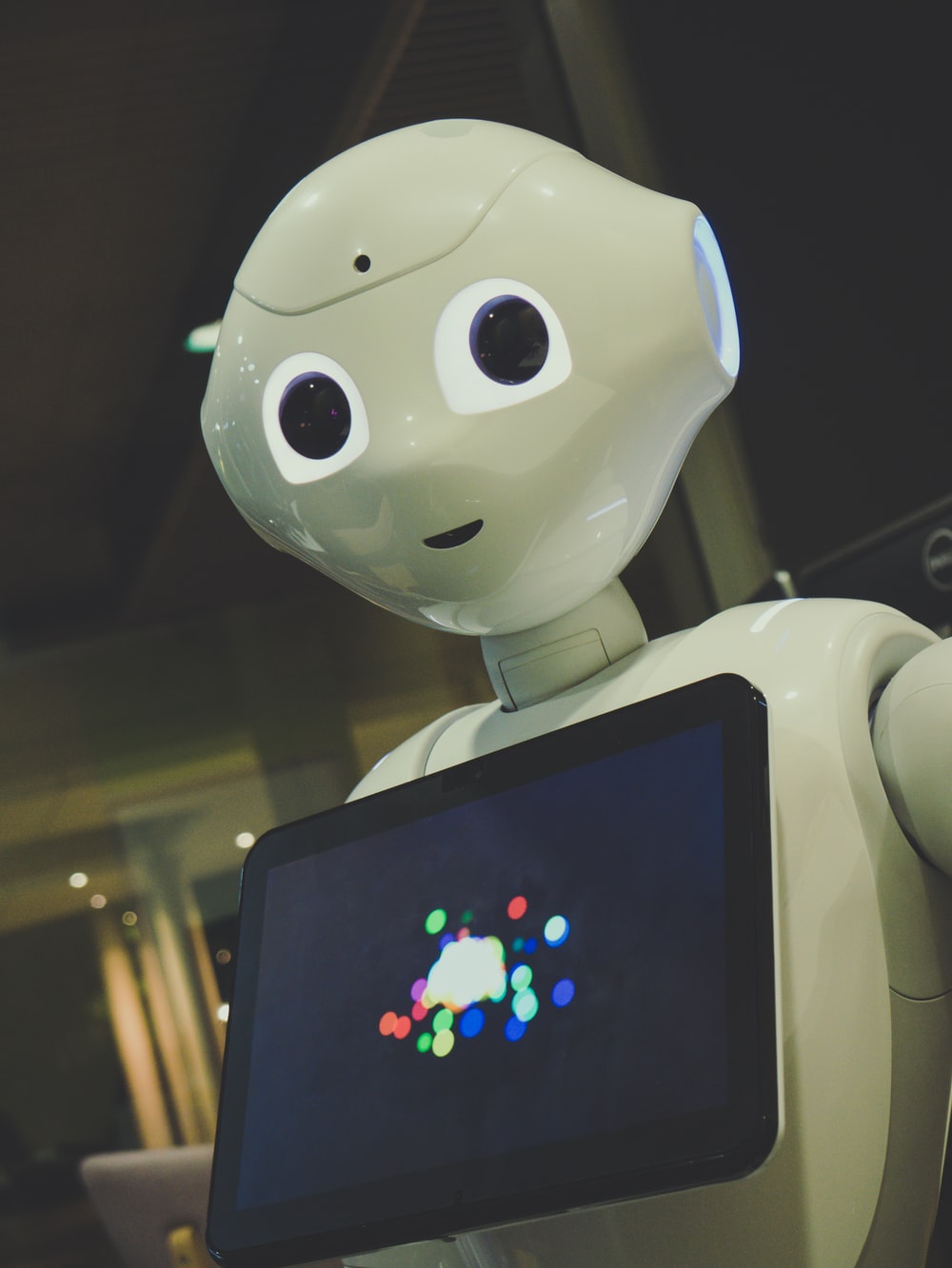

We’ve heard in the news of AI computers writing poetry, essays, symphonies, winning at games and more. But an AI does not know what it’s doing. It is not making meaning as we do. In fact, it is merely an anthropomorphism to say that computers are “thinking” or “smart.”

Again to quote Hart: “We have imposed the metaphor of an artificial mind on computers and then reimported the image of a thinking machine and imposed it on ourselves.” We speak of ourselves as computers “processing,” “downloading”, etc.

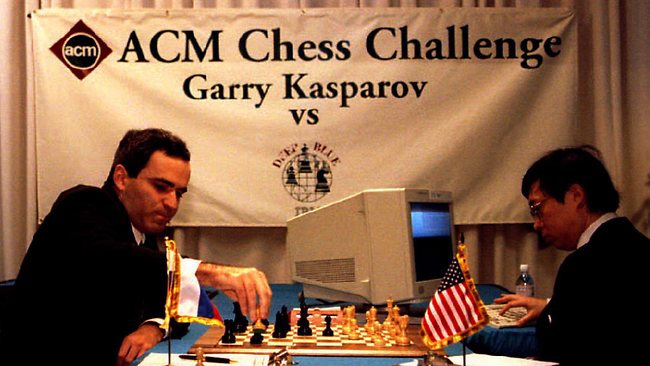

Computers are exceedingly dumb. In fact, they have exactly no intelligence at all. A computer is not even smart enough to add 2 + 2. It has no idea what it is doing. When a computer shows us the answer, it is showing us the skill of the human programmer. If you are impressed with what a computer can do, such as IBM’s Deep Blue beating Garry Kasparov at chess, you are really only impressed with the human programmers who made Deep Blue, because the computer itself is a hunk of lifeless, non-intelligent radio parts.

Compounding our bewitchment is the effort to put a moving plastic face and a well-equalized voice on the AI. Make it look more human and resisting the urge to embrace it as a fellow person becomes that much more difficult.

Conclusion

In short, the quest for AI is the quest for ultimate self-deception. We want to believe, so we will believe. We are getting more and more clever at making a machine to fool ourselves into thinking it thinks like us. Thousands of people are a part of this effort – to make the perfect mirror so that we cannot tell which is the reflection and which is real.

Even if you don’t accept my premise that humans participate with other dimensions (spiritual realms or “the divine”), you should still be dubious about what’s happening with AI. It is a gigantic project for self-deception. It is an effort to imitate God by creating new life, life in our image and beyond our image—super-intelligent life.

And I end with a prediction: as we get better and better, fine-tuning the digital automaton, and thrilling ourselves with our achievement, we will ultimately end up worshiping our new “intelligent” creation.

Just look at Way of the Future (WOTF), the church founded by Silicon Valley whiz kid Anthony Levandowski, whose purpose statement includes this phrase: “the realization, acceptance, and worship of a Godhead based on Artificial Intelligence (AI) developed through computer hardware and software.”

The church has since been closed and funds donated to the NAACP. Was it because its religious aspirations were a little too blunt to be in good taste? I don’t know. But the actual machine messiah will probably be accepted and worshiped as a matter of course, without so much intentionality and awareness. It probably won’t be in the garb of religion, but of a cultural imperative that takes the world by storm the way the internet did.

That’s my two cents.

Jeffrey – Thank you for the thoughtful analysis on the limits of AI and all such human-generated creativity. Ironically, we simultaneously cheapen our own humanity and degrade the divine nature of the Creator when we believe that somehow, through our own technological prowess, we can “become like God” and create life ex nihilo.